Getting a fix on quantum computations

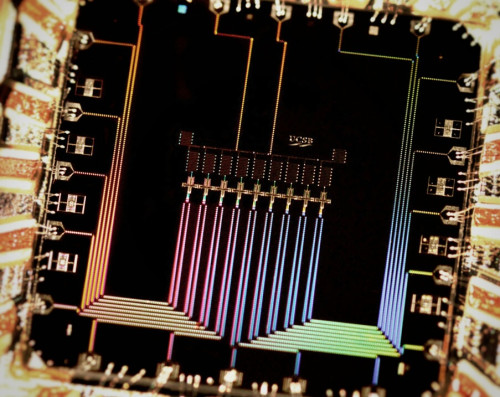

Bit of choice: A photograph of the nine-qubit superconducting device developed by the Martinis group at the University of California, Santa Barbara, in which, for the first time, the qubits can detect and effectively protect each other from bit errors. (Courtesy: Julian Kelly/Martinis group)

By Tushna Commissariat in New York City, US

Although the APS March meeting finished last Friday and I am now in New York visiting a few more labs and physicists in the city (more on that later), I am still playing catch-up, thanks to the vast number of interesting talks at the conference. One of the highlights of last week, and a pretty popular session at that, was based on “20 years of quantum error correction”, and I went along to the opening talk by physicist John Preskill of the California Institute of Technology. I had the chance to catch up with Preskill after his talk and we discussed just why he thinks that we are not too far away from a true quantum revolution.

Just in case you haven’t come across the subject already, quantum error correction is the science of protecting quantum information (stored in qubits) from the errors that occur as it is influenced by the environment and other sorts of quantum noise, which cause it to “decohere” and lose its quantum state. Although it may seem premature that scientists have been working on this problem for nearly two decades when an actual quantum computer has yet to be built, we know that we must account for such errors if our quantum computers are ever to succeed. Error correction will be essential if we want to achieve fault-tolerant quantum computation that can deal with all sorts of noise within the system, as well as faults in the hardware (such as a faulty gate) or even a faulty measurement.
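For readers who like to see an idea in code, here is a minimal sketch of the simplest kind of error correction: a three-bit repetition code that guards against bit flips. It is a purely classical toy, not a simulation of a quantum device and not the method used by any particular group, and all of the function names are made up for illustration. The one feature it shares with the quantum case is that the correction step looks only at the parities of neighbouring bits, which reveal where a flip happened without reading out the stored value itself.

```python
import random

def encode(bit):
    """Copy one logical bit onto three physical bits."""
    return [bit, bit, bit]

def apply_noise(bits, p, rng):
    """Flip each physical bit independently with probability p."""
    return [b ^ int(rng.random() < p) for b in bits]

def syndrome(bits):
    """Parities of neighbouring pairs: they locate a flip, not the stored value."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])

# Which bit to flip back for each syndrome (None means nothing to fix).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(bits):
    """Undo the single flip that the syndrome points to, if any."""
    fixed = list(bits)
    target = CORRECTION[syndrome(bits)]
    if target is not None:
        fixed[target] ^= 1
    return fixed

def decode(bits):
    """After correction all three bits agree, so read any one of them."""
    return bits[0]

def logical_error_rate(p, trials, seed=0):
    """Monte Carlo estimate of how often the protected bit is still wrong."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        noisy = apply_noise(encode(0), p, rng)
        failures += decode(correct(noisy))
    return failures / trials

def exact_logical_error(p):
    """Correction fails only when two or three of the bits flip."""
    return 3 * p**2 * (1 - p) + p**3

if __name__ == "__main__":
    p = 0.1
    print("unprotected error rate:", p)
    print("protected (simulated): ", logical_error_rate(p, 20000))
    print("protected (exact):     ", exact_logical_error(p))
```

With these assumptions the encoded bit fails only when at least two of the three copies flip, so the protected error rate falls below the raw rate whenever p is less than one half. Real quantum codes also have to handle phase errors and faulty parity measurements, which this sketch ignores.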

Over the past 20 years, theoretical work in the field has made scientists confident that the quantum computers of the future will be scalable. Preskill says that “it’s exciting because the experimentalists are taking it quite seriously now”, whereas initially the interest was mainly theoretical. Previously, scientists would artificially introduce noise into their quantum systems and then correct it, but now errors in actual quantum computations can be fixed. Indeed, Preskill says that one of the key things that has really moved quantum error correction along in the past few years is the concentrated improvement of the hardware used: better gates, with multiple qubits being processed simultaneously.